Data transformation is the engine that turns raw data into decisions. But in 2025, teams have a buffet of tools (dbt, Dataform, and Apache Airflow) that each promise to transform your data stack into something reliable, testable, and, dare I say, elegant. This article unpacks how these tools differ, where they overlap, and how to choose the right one for your projects. By the end, you’ll know practical strategies for adoption, common pitfalls to avoid, and how these tools fit into a modern analytics and ML pipeline.

Why data transformation matters (and why the tool choice matters too)

Raw data is messy: missing values, inconsistent schemas, and cryptic codes from third-party systems. Transformation is where you apply business logic, enforce quality checks, and produce clean, consumable datasets for analysts and models. The right transformation tooling accelerates delivery, enforces software engineering practices, and makes collaboration repeatable.

dbt (data build tool) emphasizes SQL-first transformations with version control, tests, and modularity. Dataform was built for cloud data warehouses, especially BigQuery, and offers an integrated environment for building SQL workflows. Apache Airflow is a general-purpose orchestrator that schedules and chains tasks across diverse systems, including transformation jobs.

High-level comparison: dbt, Dataform, and Airflow

Let’s compare them by philosophy and typical use cases:

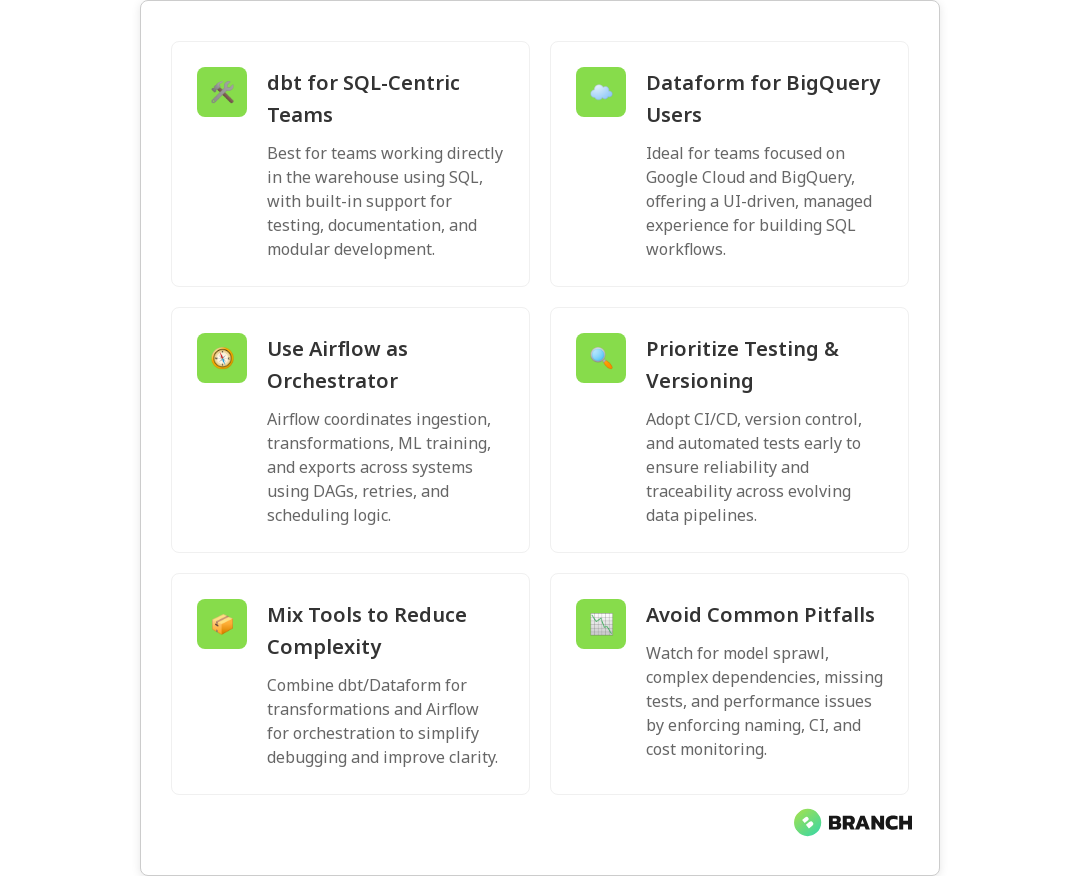

- dbt: Focused on in-warehouse transformations using SQL and modular models. dbt champions software engineering practices like testing, documentation, and reusable macros. It’s ideal when your transformations live primarily in the data warehouse and you want a clear, versioned lineage.

- Dataform: Designed as a managed, warehouse-friendly development environment. It provides a tight BigQuery integration and simplifies building SQL-based pipelines with a GUI and repository-backed workflows. For teams deeply embedded in Google Cloud/BigQuery, Dataform streamlines the developer experience.

- Apache Airflow: A workflow orchestrator, not a transformation engine. Airflow schedules and monitors tasks (transformations, data ingestion, ML training jobs, and more) across heterogeneous systems. Use Airflow when your pipeline spans many systems and needs flexible control flow, retries, and dependency management.

For a practical technical comparison that highlights the developer experience differences between dbt and Dataform, see this dbt vs Dataform comparison.

How teams typically combine these tools

In many modern stacks, these tools are complementary rather than exclusive:

- Use dbt to implement transformations, tests, and documentation inside the warehouse. Its model-centric approach yields clean, version-controlled datasets.

- Use Dataform when you want a streamlined developer experience closely tied to BigQuery, especially if you value an integrated UI and simple deployment.

- Use Airflow to orchestrate the broader flow: trigger ingestion, kick off dbt or Dataform jobs, run ML training, and manage downstream exports.

In short: dbt/Dataform = transformation logic; Airflow = conductor. That conductor can also call transformations built with dbt or Dataform.
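To make the conductor idea concrete, here is a minimal sketch in plain Python rather than real Airflow code. It assumes a hypothetical five-task pipeline (the task names are invented for illustration) and uses the standard library's `graphlib` to work out an order in which every task runs after the tasks it depends on, which is roughly the job a DAG-based orchestrator does for you.

```python
from graphlib import TopologicalSorter

# Hypothetical task names; each task maps to the set of tasks it depends on.
PIPELINE = {
    "ingest_orders": set(),
    "dbt_run": {"ingest_orders"},
    "dbt_test": {"dbt_run"},
    "train_model": {"dbt_test"},
    "export_report": {"dbt_test"},
}


def execution_order(pipeline):
    """Return one valid run order in which every task follows its dependencies."""
    return list(TopologicalSorter(pipeline).static_order())


def run_pipeline(pipeline, actions):
    """Call each task's function in dependency order and return the order used."""
    order = execution_order(pipeline)
    for task in order:
        actions[task]()
    return order
```

A real orchestrator adds scheduling, retries, and monitoring on top of this ordering, but the division of labor is the same: the tasks hold the logic, and the graph decides when they run.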

Practical strategies for choosing and implementing

Choosing the right approach depends on people, platform, and policy. Here are practical strategies to guide the decision:

1. Start with your warehouse and team skills

If your team is SQL-first and your warehouse supports dbt well (Snowflake, BigQuery, Redshift, Databricks SQL), dbt is typically the fastest path to disciplined transformations. If you’re firmly on BigQuery and want an integrated UI experience, Dataform can speed onboarding.

2. Use software engineering practices from day one

Whatever tool you pick, version control, CI/CD, code review, and automated testing matter. dbt has built-in testing and documentation features that map naturally to software engineering workflows. Dataform also supports repo-backed development. For orchestration, integrate Airflow tasks into CI so scheduled changes are predictable.
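As one possible shape for a CI gate, the sketch below wraps the `dbt build` command, which runs models and their tests together, and reports whether it succeeded. The function names, the `ci` target name, and the injectable `runner` are assumptions made for illustration; the target would have to exist in your own dbt profile.

```python
import subprocess


def dbt_build_command(target="ci", select=None):
    """Build the argument list for a `dbt build` invocation."""
    command = ["dbt", "build", "--target", target]
    if select:
        # Optionally restrict the run to a node selector such as "orders+".
        command += ["--select", select]
    return command


def run_ci_gate(runner=subprocess.run, **kwargs):
    """Run dbt build and return True only when it exits with code 0."""
    result = runner(dbt_build_command(**kwargs), check=False)
    return result.returncode == 0
```

A CI job could call `run_ci_gate()` and fail the pipeline when it returns `False`. Passing a fake `runner` keeps the wrapper itself testable without a warehouse connection.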

3. Combine tools when it reduces complexity

Don’t try to make a single tool do everything. Use dbt/Dataform to produce reliable datasets, and Airflow to orchestrate and monitor. This makes debugging easier: transformation errors show up in dbt tests, while scheduling issues appear in Airflow logs.

4. Plan for observability and lineage

Choose tools and deployments that expose lineage and metadata. dbt generates a lineage graph and docs site; integrating that with your observability stack reduces mean time to resolution when data consumers complain.
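Lineage pays off when you can ask "what breaks if this model is wrong?" The sketch below answers that question for a small, hypothetical dependency map (the model names are invented, and the dictionary is hand-written rather than read from any tool's metadata). It walks the graph to collect every model downstream of a given one.

```python
# Hypothetical model names; each model maps to the models it selects from.
PARENTS = {
    "stg_orders": [],
    "stg_customers": [],
    "orders_enriched": ["stg_orders", "stg_customers"],
    "daily_revenue": ["orders_enriched"],
    "customer_ltv": ["orders_enriched"],
}


def downstream_of(model, parents):
    """Return every model that directly or transitively depends on `model`."""
    # Invert the parent map so each model lists its direct children.
    children = {}
    for child, upstreams in parents.items():
        for upstream in upstreams:
            children.setdefault(upstream, []).append(child)

    affected, stack = set(), [model]
    while stack:
        current = stack.pop()
        for child in children.get(current, []):
            if child not in affected:
                affected.add(child)
                stack.append(child)
    return affected
```

With the map above, a problem in `stg_orders` touches `orders_enriched`, `daily_revenue`, and `customer_ltv`, which is the list of owners you would want to notify first.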

Read more: Data Engineering for AI – a guide to why disciplined pipelines are essential for reliable AI systems.

Common challenges and how to avoid them

Even with the right tools, teams hit roadblocks. Here are the predictable ones and how to mitigate them:

- Model sprawl: Over time, hundreds of dbt models can accumulate. Solve this with naming conventions, model folders, and regular cleanup sprints.

- Complex dependencies: If transformations depend on many upstream systems, use Airflow to enforce ordering and retries, and design idempotent tasks.

- Testing gaps: Tests only help if you run them. Integrate dbt tests into CI and run them before merging changes to main branches.

- Performance surprises: Transformations can be expensive. Monitor query costs, choose materializations deliberately (for example, incremental models for large tables), and profile queries for hot spots.
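On the testing gap in particular, it helps to see how little a data test actually is. The sketch below mimics, in plain Python, the idea behind dbt's `not_null` and `unique` tests: each check returns the offending rows or values, and an empty result means the test passes. The function names and sample rows are illustrative assumptions, not dbt's implementation.

```python
from collections import Counter


def not_null_failures(rows, column):
    """Return the rows where `column` is missing or None."""
    return [row for row in rows if row.get(column) is None]


def unique_failures(rows, column):
    """Return the non-null values of `column` that appear more than once."""
    counts = Counter(
        row[column] for row in rows if row.get(column) is not None
    )
    return sorted(value for value, count in counts.items() if count > 1)


def passes(rows, column):
    """A column passes when both checks come back empty."""
    return not not_null_failures(rows, column) and not unique_failures(rows, column)
```

Running checks like these on every pull request is what turns them from documentation into a gate.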

Best practices and patterns

- Small, well-tested models: Prefer many small dbt models over a few massive queries. Small models are easier to test and maintain.

- Idempotency: Ensure transformation jobs can run multiple times without corrupting results. This is particularly important when Airflow retries tasks.

- Incremental builds: Use incremental materializations for large tables to control cost and speed.

- Document models: Use dbt docs or Dataform descriptions so downstream users understand what each dataset represents.
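Idempotency and incremental builds tend to go together, so here is a small sketch of both using Python's built-in `sqlite3` as a stand-in warehouse. The `orders` table, its columns, and the `load_incremental` function are assumptions for illustration. The load only considers rows at or after the latest timestamp already stored, and it upserts by primary key, so a retried run leaves the table unchanged.

```python
import sqlite3


def load_incremental(conn, new_rows):
    """Upsert (order_id, amount, updated_at) rows; safe to run repeatedly."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders ("
        "order_id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT)"
    )
    # High-water mark: the latest timestamp already in the table.
    (high_water,) = conn.execute(
        "SELECT COALESCE(MAX(updated_at), '') FROM orders"
    ).fetchone()
    # Use >= so rows sharing the latest timestamp are reprocessed, not skipped;
    # the upsert below keeps that reprocessing from creating duplicates.
    fresh = [row for row in new_rows if row[2] >= high_water]
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", fresh)
    conn.commit()
    return len(fresh)
```

Timestamps here are ISO date strings, which compare correctly as text. In a real warehouse the same pattern usually appears as a merge keyed on a unique column, which is the role an incremental model's unique key plays.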

Trends and the future of transformation tooling

A few trends are shaping how teams approach transformation:

- Warehouse-native tools win for speed: As warehouses gain compute and features, in-warehouse transformations (dbt, Dataform) reduce data movement and latency.

- Tighter integration with orchestration: Airflow and managed schedulers are increasingly orchestrating dbt/Dataform runs, coordinating dependencies, retries, and alerting across systems in one place.

- Data contracts and tests: Automated tests and contractual guarantees between producers and consumers are becoming standard in mature teams.

- Metadata-first operations: Lineage, observability, and cost attribution tools are integrated into pipelines to help ops teams manage scale and budget.

When to pick each tool

- Pick dbt if you want a mature, SQL-first transformation framework with strong community packages, tests, and documentation features. It’s the go-to when you want reproducible, versioned models and developer-friendly macros.

- Pick Dataform if you’re heavily invested in BigQuery and prefer an integrated, warehouse-native developer experience with streamlined deployment inside Google Cloud.

- Pick Airflow if your workflows span many systems (APIs, cloud functions, ML training, and ETL processes) and you need a flexible DAG-based orchestrator to manage retries, backfills, and complex dependencies.

FAQ

What does data transformation mean?

Data transformation is the process of converting raw data into a structured, consistent format suitable for analysis, reporting, or machine learning. It includes cleaning (removing duplicates, handling nulls), standardizing formats, aggregating records, and applying business rules so that consumers can reliably use the data.

What is an example of data transformation in real life?

Consider an e-commerce company: raw order events show up with different timestamp formats, product codes, and customer IDs. Transformation combines these events into a clean orders table with standardized timestamps, resolved product names, calculated lifetime value, and flags for fraud or returns. That orders table then feeds dashboards and recommendation models.
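Here is a rough sketch of the first part of that cleanup in Python. The timestamp formats, product codes, and field names are all invented for illustration, and the code assumes timestamps without a timezone are already in UTC, which you would need to confirm for real sources.

```python
from datetime import datetime, timezone

# Hypothetical formats and product codes, chosen only for illustration.
TIMESTAMP_FORMATS = ("%Y-%m-%dT%H:%M:%S", "%d/%m/%Y %H:%M", "%Y%m%d%H%M%S")
PRODUCT_NAMES = {"SKU-1": "Coffee Grinder", "SKU-2": "Kettle"}


def parse_timestamp(raw):
    """Try each known format and return an ISO 8601 string tagged as UTC."""
    for fmt in TIMESTAMP_FORMATS:
        try:
            parsed = datetime.strptime(raw, fmt)
        except ValueError:
            continue
        # Assumption: naive timestamps are already in UTC.
        return parsed.replace(tzinfo=timezone.utc).isoformat()
    raise ValueError(f"unrecognized timestamp: {raw!r}")


def to_order_row(event):
    """Turn one raw order event into a row for a clean orders table."""
    return {
        "order_id": event["order_id"],
        "ordered_at": parse_timestamp(event["ts"]),
        "product_name": PRODUCT_NAMES.get(event["sku"], "unknown"),
        "is_return": event.get("status") == "returned",
    }
```

Raising on an unrecognized format is a deliberate choice: a loud failure is easier to fix than a silently wrong timestamp in a dashboard.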

What are the steps of data transformation?

Typical steps include extraction (getting raw records), cleaning (deduplication and standardization), enrichment (joining reference data), aggregation (summaries for reporting), validation (tests and checks), and loading (writing transformed data to a destination). Tools like dbt or Dataform focus on the cleaning/enrichment/aggregation/validation steps inside the warehouse.
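The middle steps can be sketched as a chain of small functions. This is a toy version in plain Python with invented field names (`order_id`, `country`, `amount`) and a made-up region lookup; in a warehouse each function would more likely be a SQL model, but the shape is the same.

```python
def clean(records):
    """Drop repeated order ids, keeping the first occurrence."""
    seen, kept = set(), []
    for record in records:
        if record["order_id"] not in seen:
            seen.add(record["order_id"])
            kept.append(record)
    return kept


def enrich(records, regions):
    """Join a region name onto each record from reference data."""
    return [
        {**record, "region": regions.get(record["country"], "unknown")}
        for record in records
    ]


def aggregate(records):
    """Sum order amounts per region."""
    totals = {}
    for record in records:
        totals[record["region"]] = totals.get(record["region"], 0) + record["amount"]
    return totals


def validate(totals):
    """Fail loudly if any regional total is negative."""
    bad = [region for region, total in totals.items() if total < 0]
    if bad:
        raise ValueError(f"negative totals for: {bad}")
    return totals


def transform(records, regions):
    """Run clean, enrich, aggregate, and validate in order."""
    return validate(aggregate(enrich(clean(records), regions)))
```

Keeping each step separate means a failure points at one function, which mirrors the advice above about preferring small models.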

What is data transformation in ETL?

In ETL (Extract, Transform, Load), transformation is the middle step where extracted data is converted to the desired structure and quality before loading into the target system. Modern variations often invert this pattern to ELT (Extract, Load, Transform), where data is loaded into the warehouse first and transformed there. This is where dbt and Dataform excel.
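To see the ELT ordering in miniature, the sketch below uses Python's built-in `sqlite3` as a stand-in for a warehouse. The table and column names are assumptions for illustration. Raw events are loaded untouched first, and only then does a SQL statement inside the database produce the clean table.

```python
import sqlite3


def load_raw(conn, events):
    """Load step: store events exactly as they arrived, with no cleanup."""
    conn.execute(
        "CREATE TABLE raw_orders (order_id INTEGER, status TEXT, amount TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", events)


def transform_in_warehouse(conn):
    """Transform step: build a clean table with SQL that runs in the database."""
    conn.execute(
        """
        CREATE TABLE orders AS
        SELECT order_id,
               LOWER(TRIM(status)) AS status,
               CAST(amount AS REAL) AS amount
        FROM raw_orders
        WHERE order_id IS NOT NULL
        """
    )
    return conn.execute(
        "SELECT order_id, status, amount FROM orders ORDER BY order_id"
    ).fetchall()
```

Because the raw table is kept as loaded, the transform can be rewritten and rerun later without going back to the source system, which is much of the appeal of ELT.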

Why would you transform data?

Transforming data makes it accurate, understandable, and usable. It turns inconsistent, noisy inputs into trusted datasets that support analytics, reporting, and ML. In short: transformed data saves time, reduces errors, and enables reliable business decisions.

Final thoughts

dbt, Dataform, and Airflow each solve different problems in the transformation lifecycle. dbt and Dataform help you write, test, and version transformations inside the warehouse; Airflow orchestrates the wider workflow. Use them together when appropriate: write reliable models with dbt or Dataform, and let Airflow handle scheduling, retries, and cross-system dependencies. With these patterns in place (automated tests, documentation, lineage, and observability), your data will stop being a mysterious treasure map and start being a reliable roadmap for decision-making.

Read more: Latest Insights – for more articles and case studies about building resilient data and analytics systems.