Deciding between batch and stream processing is like choosing between a slow-cooked Sunday roast and a speedy breakfast smoothie — both feed you, but one is designed for depth and the other for immediacy. In data-driven organizations, the choice affects latency, cost, infrastructure, and ultimately how quickly you can act on insights. This article walks through the core differences, real-world use cases, architecture considerations, and practical tips to help you optimize data workflows for business impact.

Why this matters

Data is the engine behind decisions, whether that’s adjusting inventory, preventing fraud, or serving personalized content. Batch processing is built for exhaustive, high-volume work that runs on a schedule; stream processing is for continuous, low-latency insights. Picking the wrong approach can slow innovation, inflate costs, or make your analytics irrelevant by the time results arrive. Understanding both lets you match the right tool to the right job and design systems that are both fast and reliable.

Core differences at a glance

Think of batch vs stream along a few dimensions:

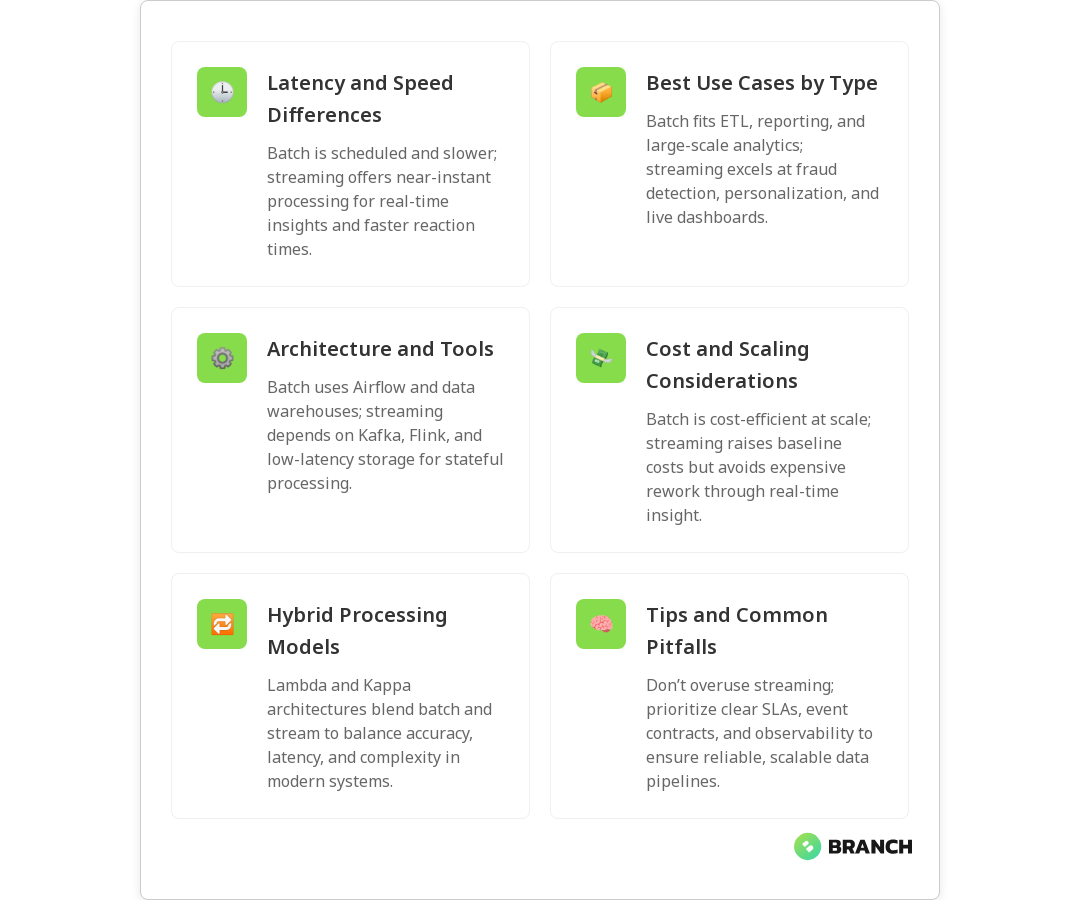

- Latency: Batch runs on a schedule (minutes to hours), while streaming processes events as they arrive (milliseconds to seconds).

- Throughput: Batch can efficiently process massive volumes in bulk; streaming is optimized for continuous flow and consistent throughput over time.

- Complexity: Streaming often requires more complex architecture (state management, windowing, handling late arrivals) than batch jobs.

- Use cases: Batch is great for ETL, historical analytics, and reporting; streaming shines for monitoring, fraud detection, personalization, and operational dashboards.
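To make the latency difference concrete, here is a minimal Python sketch that computes the same order total both ways. The function names are illustrative, not taken from any framework. The batch version waits for the full dataset and answers once; the streaming version keeps a small piece of running state and emits an updated answer after every event.

```python
from typing import Iterable, Iterator

def batch_total(amounts: list[float]) -> float:
    """Batch: the whole dataset is available, so compute the answer once."""
    return sum(amounts)

def stream_totals(events: Iterable[float]) -> Iterator[float]:
    """Stream: keep running state and emit a fresh result per event."""
    running = 0.0  # state that must survive between events
    for amount in events:
        running += amount
        yield running  # an up-to-date answer is available immediately

orders = [20.0, 5.0, 12.5]
print(batch_total(orders))          # 37.5
print(list(stream_totals(orders)))  # [20.0, 25.0, 37.5]
```

Both end at the same number; the difference is when an answer first becomes available, and the fact that the streaming version has to carry state between events.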

For a practical comparison and decision checklist, see a clear walk-through from DataCamp on when to use each approach (DataCamp overview).

When to choose batch processing

Batch processing is the reliable workhorse. Choose it when:

- You can tolerate latency and prefer processing large windows of data at once.

- Historical accuracy and repeatability matter (monthly financial closes, complex aggregations, machine learning model training).

- Cost per unit of work matters: batch jobs amortize fixed overhead (such as cluster startup) across many records and can be more cost-effective for huge datasets.

- Your data arrives in predictable bursts or schedules (e.g., daily logs, nightly ETL).

Common examples include billing runs, nightly data warehouse updates, and long-running ML model retraining. In many enterprises, batch remains the backbone for heavy-duty analytics because it’s simple to reason about and easier to test.
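Part of what makes batch easy to test is repeatability: given the same input slice, rerunning a job should produce the same output. A minimal sketch of the common "overwrite one date partition" pattern, assuming a hypothetical in-memory `warehouse` dict standing in for date-partitioned tables:

```python
from collections import defaultdict
from datetime import date

# Hypothetical stand-in for a warehouse table partitioned by run date.
warehouse: dict[date, dict[str, float]] = {}

def nightly_revenue_job(run_date: date, raw_rows: list[tuple[date, str, float]]) -> None:
    """Aggregate one day's rows and overwrite that day's partition."""
    totals: dict[str, float] = defaultdict(float)
    for row_date, region, amount in raw_rows:
        if row_date == run_date:  # process a bounded, well-defined slice
            totals[region] += amount
    # Overwrite instead of appending, so a rerun replaces rather than duplicates.
    warehouse[run_date] = dict(totals)

rows = [
    (date(2024, 1, 1), "eu", 100.0),
    (date(2024, 1, 1), "us", 250.0),
    (date(2024, 1, 1), "eu", 50.0),
    (date(2024, 1, 2), "us", 75.0),
]
nightly_revenue_job(date(2024, 1, 1), rows)
nightly_revenue_job(date(2024, 1, 1), rows)  # rerun after a "failure": same result
print(warehouse[date(2024, 1, 1)])  # {'eu': 150.0, 'us': 250.0}
```

Because each run fully replaces its partition, recovering from a failed night is just "run it again", which is much of why batch is considered simple to operate.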

When to choose stream processing

Stream processing is the adrenaline shot for modern data systems. Choose streaming when:

- Near real-time decisions are critical (fraud alerts, live personalization, anomaly detection).

- Data arrives continuously and you need continuous results rather than periodic summaries.

- Operational monitoring, A/B testing feedback loops, or event-driven services rely on up-to-the-second information.

Implementing streaming requires attention to out-of-order events, late-arriving data, and stateful computations. Databricks’ documentation lays out key trade-offs like stateless vs stateful processing and how to manage late arrivals in streaming systems (Databricks docs).
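Out-of-order and late events are easiest to see in code. The sketch below is plain Python, not the Databricks, Spark, or Flink API. It counts events in 60-second event-time tumbling windows and uses a watermark (the newest event time seen minus an allowed lateness; both numbers are illustrative) to decide when a window is final. Anything arriving after its window has been finalized is set aside instead of being silently miscounted.

```python
from collections import defaultdict

WINDOW = 60    # tumbling window size in seconds (illustrative)
LATENESS = 30  # allowed out-of-orderness in seconds (illustrative)

def windowed_counts(events):
    """Count events per key in event-time tumbling windows.

    `events` is a list of (event_time_seconds, key) in *arrival* order,
    which can differ from event-time order. Returns (emitted, dropped):
    windows finalized once the watermark passed their end, and events that
    arrived after their window had already been finalized.
    """
    open_windows = defaultdict(lambda: defaultdict(int))
    watermark = float("-inf")  # "no event older than this is still expected"
    emitted, dropped = [], []
    for ts, key in events:
        start = ts - ts % WINDOW
        if start + WINDOW <= watermark:
            dropped.append((ts, key))  # too late: its window is already final
            continue
        open_windows[start][key] += 1
        watermark = max(watermark, ts - LATENESS)
        # Finalize every open window whose end the watermark has passed.
        for s in sorted(open_windows):
            if s + WINDOW <= watermark:
                emitted.append((s, dict(open_windows.pop(s))))
    # Windows still open here wait until later events advance the watermark.
    return emitted, dropped

arrivals = [(5, "a"), (62, "a"), (40, "b"), (95, "a"), (10, "b")]
emitted, dropped = windowed_counts(arrivals)
print(emitted)  # [(0, {'a': 1, 'b': 1})]
print(dropped)  # [(10, 'b')]
```

The event at t=40 arrives after one at t=62 but is still counted, because it falls within the 30-second lateness bound. The event at t=10 shows up after the watermark has moved past its window, so it is reported as dropped. Spark Structured Streaming's `withWatermark` and Flink's watermark strategies expose a conceptually similar knob: a larger lateness bound gives more complete results, at the cost of later output and more state held open.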

Architecture and tooling — what changes under the hood

Batch architectures typically use orchestrators (like Airflow), scheduled compute clusters, and ELT pipelines feeding a data warehouse or lake. Streaming architectures use event brokers (Kafka, Kinesis), stream processors (Flink, Spark Structured Streaming), and low-latency stores for state.

Key considerations:

- Stateful processing: Streaming frameworks must manage in-memory or persistent state for aggregations and joins across time windows.

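- Fault tolerance: Exactly-once semantics are harder to achieve in streaming than in batch, where a failed job can simply be rerun, but they are increasingly available in streaming stacks, typically through checkpointing combined with transactional or idempotent sinks.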

- Operational complexity: Streaming teams often need more specialized skills (observability for lag, backpressure handling, and recovery patterns).
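Fault tolerance in stream processors largely comes down to one idea: persist the operator's state and the input position (offset) together, so that after a crash the job resumes from a consistent snapshot and replays only what came after it. The sketch below imitates that with a JSON file and a Python list standing in for one broker partition. The file format, the `every` checkpoint interval, and the simulated crash are illustrative assumptions, not how Flink or Kafka actually store checkpoints.

```python
import json
import os
import tempfile

def _save(path, offset, total):
    """Write offset and state together via an atomic rename."""
    tmp = path + ".tmp"
    with open(tmp, "w") as f:
        json.dump({"offset": offset, "total": total}, f)
    os.replace(tmp, path)  # readers see the old or new snapshot, never half of one

def process_with_checkpoint(log, path, every=2, crash_after=None):
    """Sum values from `log`, checkpointing every `every` events.

    `crash_after` simulates a failure after that many events in this run.
    """
    if os.path.exists(path):
        with open(path) as f:
            snap = json.load(f)
    else:
        snap = {"offset": 0, "total": 0}
    offset, total = snap["offset"], snap["total"]  # restore a consistent snapshot
    processed = 0
    while offset < len(log):
        if processed == crash_after:
            raise RuntimeError("simulated crash")  # in-memory progress is lost
        total += log[offset]
        offset += 1
        processed += 1
        if offset % every == 0 or offset == len(log):
            _save(path, offset, total)
    return total

log = [3, 4, 5, 6, 7]
ckpt = os.path.join(tempfile.mkdtemp(), "ckpt.json")
try:
    process_with_checkpoint(log, ckpt, crash_after=3)
except RuntimeError:
    pass
print(process_with_checkpoint(log, ckpt))  # 25, the same as sum(log)
```

The event with value 5 is processed twice (once before the crash, once after recovery), yet it is counted only once, because the restored state predates it. End-to-end exactly-once additionally requires the sink's writes to be tied to the same checkpoint or made idempotent.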

Hybrid approaches: the best of both worlds

Most mature data platforms aren’t strictly batch or strictly streaming. Hybrid models combine immediate streaming for low-latency needs with batch for deep historical processing. Two common patterns:

- Lambda architecture: Streams handle real-time views, while a batch layer recomputes accurate historical results. This gives quick approximations and eventual correctness, but it can be operationally heavy.

- Kappa architecture: Uses a streaming-first approach where reprocessing is handled by replaying the event log; simpler operationally if the streaming stack supports it well.
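The structural difference between the two patterns shows up even in a toy example. In this sketch (plain Python with `collections.Counter`; the cutoff timestamp and page-view events are illustrative), Lambda answers a query by merging a batch view with a speed view. Kappa keeps a single streaming code path and rebuilds results by replaying the log through it.

```python
from collections import Counter

# Each event is (timestamp, page); the data is illustrative only.
log = [(1, "home"), (2, "cart"), (3, "home"), (4, "home"), (5, "cart")]
CUTOFF = 4  # assume the last batch run covered events with timestamp < 4

# --- Lambda: two code paths over the same data ---
def batch_layer(events):
    """Recomputed on a schedule from full history: slow but authoritative."""
    return Counter(page for ts, page in events if ts < CUTOFF)

def speed_layer(events):
    """Maintained incrementally for events the batch layer hasn't reached yet."""
    return Counter(page for ts, page in events if ts >= CUTOFF)

def serving_layer(page, batch, speed):
    """Queries merge the two views."""
    return batch[page] + speed[page]

# --- Kappa: one code path; reprocessing means replaying the log ---
def stream_job(events):
    state = Counter()
    for _ts, page in events:  # the same logic serves live traffic and replays
        state[page] += 1
    return state

print(serving_layer("home", batch_layer(log), speed_layer(log)))  # 3
print(stream_job(log)["home"])                                     # 3
```

Lambda's batch and speed layers encode the same counting logic twice, and keeping them in sync is a large part of its operational weight. Kappa avoids the duplication, but it depends on the log retaining enough history and on the stream processor replaying it fast enough.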

Prophecy outlines how architects weigh these models and why many teams choose hybrid routes to balance correctness, latency, and complexity (Prophecy discussion).

Performance, cost, and scaling

Cost profiles differ. Batch jobs can be scheduled to run when resources are cheap (off-peak), and they can amortize startup costs over huge workloads. Streaming requires always-on infrastructure or autoscaling that reacts rapidly to load, which can increase baseline spend. However, streaming can reduce downstream cost by preventing expensive rework (e.g., catching issues early).
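A back-of-the-envelope model makes this trade-off easier to discuss with stakeholders. The sketch below is deliberately simplified: it ignores storage, networking, and autoscaling, and every number in the usage lines is a placeholder, not a real cloud price.

```python
HOURS_PER_MONTH = 730  # roughly 365 * 24 / 12

def batch_monthly_cost(runs, hours_per_run, nodes, node_hour_rate, startup_hours=0.25):
    """Scheduled job: pay only while it runs, plus a fixed spin-up overhead per run."""
    return runs * (hours_per_run + startup_hours) * nodes * node_hour_rate

def streaming_monthly_cost(always_on_nodes, node_hour_rate):
    """Always-on pipeline: the baseline cluster is billed for every hour of the month."""
    return HOURS_PER_MONTH * always_on_nodes * node_hour_rate

# Placeholder inputs: a nightly 2-hour job on 10 nodes vs. a 2-node always-on stream.
print(batch_monthly_cost(runs=30, hours_per_run=2, nodes=10, node_hour_rate=0.5))  # 337.5
print(streaming_monthly_cost(always_on_nodes=2, node_hour_rate=0.5))              # 730.0
```

With these placeholder inputs, the batch job uses five times as many nodes while it runs, yet the always-on baseline still costs more because it is billed around the clock. The comparison flips if the stream prevents rework that would cost more than the difference.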

Scaling considerations:

- Horizontal scaling: Both models scale horizontally, but streaming systems often need careful partitioning strategies to avoid skew and hot keys.

- Latency vs cost trade-offs: Pushing for sub-second responses may require different hardware, caching, and operational overhead.

- Reprocessing: Batch makes reprocessing simple (rerun the job); streaming needs event replay and idempotency patterns to avoid duplication or gaps.
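Hot keys are a common source of skew: if one key (a viral product, a single large customer) receives most events, the partition that owns it becomes the bottleneck. One widely used mitigation is key salting with two-stage aggregation. The sketch below illustrates the idea in plain Python. The `#` separator, the bucket and partition counts, and the assumption that you already know which keys are hot are all illustrative choices.

```python
import zlib
from collections import Counter

NUM_PARTITIONS = 4
SALT_BUCKETS = 3  # how many sub-keys a hot key is spread across (illustrative)

def partition_for(key: str) -> int:
    """Stable hash partitioning (crc32 is deterministic across processes, unlike hash())."""
    return zlib.crc32(key.encode()) % NUM_PARTITIONS

def salted_key(key: str, event_id: int, hot_keys: set[str]) -> str:
    """Spread a known hot key over several sub-keys; leave other keys unchanged.

    Assumes real keys never contain '#'.
    """
    if key in hot_keys:
        return f"{key}#{event_id % SALT_BUCKETS}"
    return key

def two_stage_count(events, hot_keys):
    # Stage 1: partial counts per (possibly salted) key; in a real system these
    # sub-keys can land on different partitions and be counted in parallel.
    partial = Counter(salted_key(k, eid, hot_keys) for eid, k in events)
    # Stage 2: strip the salt and combine partials into final per-key counts.
    final = Counter()
    for salted, n in partial.items():
        final[salted.split("#", 1)[0]] += n
    return final

events = [(i, "celebrity") for i in range(9)] + [(9, "alice")]
print(two_stage_count(events, hot_keys={"celebrity"}))
# Counter({'celebrity': 9, 'alice': 1})
print({k: partition_for(k) for k in ("celebrity#0", "celebrity#1", "celebrity#2")})
```

The cost is an extra aggregation step, and salting only works for operations that can be combined from partial results (counts, sums, min/max), not for something like an exactly ordered sequence per key.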

Implementation challenges and practical tips

Common pitfalls teams run into:

- Over-specifying streaming: Not every analytics problem needs real-time answers. Streaming everything increases complexity and cost.

- Ignoring data quality: Both batch and streaming rely on reliable schemas and validation. Streaming adds the challenge of validating data as it arrives.

- Under-investing in observability: Monitoring throughput, lag, and state sizes is essential for stable streaming systems.
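Consumer lag is usually the first streaming metric worth alerting on. Conceptually, it is the gap between the newest offset written to each partition and the offset your consumer has committed. A minimal sketch, assuming you have already fetched both sets of offsets from your broker's admin API or metrics system (the dictionaries here are made up):

```python
def consumer_lag(end_offsets: dict[int, int], committed: dict[int, int]) -> dict[int, int]:
    """Per-partition lag: events written but not yet processed.

    Partitions with no committed offset are treated as starting at 0, which
    is a simplification: real logs may have a non-zero start offset.
    """
    return {p: end - committed.get(p, 0) for p, end in end_offsets.items()}

def lag_alerts(lag: dict[int, int], threshold: int) -> list[int]:
    """Partitions whose backlog exceeds the alert threshold."""
    return sorted(p for p, n in lag.items() if n > threshold)

end_offsets = {0: 1200, 1: 1500, 2: 900}  # newest offset per partition (made up)
committed = {0: 1190, 1: 400, 2: 900}     # consumer group's committed offsets (made up)
lag = consumer_lag(end_offsets, committed)
print(lag)                   # {0: 10, 1: 1100, 2: 0}
print(lag_alerts(lag, 500))  # [1]
```

A single partition lagging far behind the others, as partition 1 does here, often points to skew or a hot key rather than an undersized cluster. Lag that grows steadily across all partitions suggests the consumers simply can't keep up.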

Practical implementation tips:

- Start with clear SLAs for latency and correctness. The SLA should drive design choices.

- Use event-driven design — define clear event contracts and versioning plans for producers and consumers.

- Build replayability: keep an immutable event log so you can reprocess if needed.

- Invest in testing: unit tests for transformations, integration tests for end-to-end flows, and chaos tests for failure modes.
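Event contracts, versioning, and testing fit together naturally. A consumer validates each incoming event against the contract it understands, upgrades older versions it still supports, and rejects anything else. That parsing logic is exactly the kind of transformation worth unit-testing. The sketch below uses a Python dataclass; the `OrderPlaced` event, its fields, the `schema_version` field, and the USD default for v1 events are all hypothetical. In production, this role is often filled by a schema registry with Avro or Protobuf schemas.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class OrderPlaced:
    """Current (v2) shape of a hypothetical event; v2 added `currency`."""
    order_id: str
    amount_cents: int
    currency: str

def parse_order_placed(raw: dict) -> OrderPlaced:
    """Validate an incoming event and upgrade supported older versions.

    Missing required fields raise KeyError; bad values raise ValueError.
    """
    version = raw.get("schema_version", 1)
    if version == 1:
        # Assumption: v1 producers predate multi-currency support, so USD is implied.
        raw = {**raw, "currency": "USD"}
    elif version != 2:
        raise ValueError(f"unsupported schema_version: {version}")
    amount = raw["amount_cents"]
    if not isinstance(amount, int) or amount < 0:
        raise ValueError(f"invalid amount_cents: {amount!r}")
    return OrderPlaced(str(raw["order_id"]), amount, str(raw["currency"]))

# Unit test for the transformation (pytest discovers test_* functions).
def test_v1_event_is_upgraded():
    event = parse_order_placed({"order_id": "o-1", "amount_cents": 1299})
    assert event == OrderPlaced("o-1", 1299, "USD")

test_v1_event_is_upgraded()
```

Keeping the upgrade logic in one place means producers can migrate to a new version gradually, while consumers keep accepting both versions during the transition.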

Trends and what to watch

Streaming capabilities are improving with better state stores, managed services, and libraries that provide stronger guarantees. Atlan and Monte Carlo discuss how streaming is increasingly used for operational monitoring and immediate business responses, while batch remains central to deep analytics and planning (Atlan perspective, Monte Carlo analysis).

Look for:

- More managed streaming offerings that reduce operational overhead.

- Better support for exactly-once semantics and stateful stream processing.

- Tighter integration between streaming and data warehouses to blur the lines between real-time and batch analytics.

Making the decision: checklist

- Define the business question and maximum acceptable latency.

- Estimate data volume and burstiness to understand cost implications.

- Assess team skills: do you have streaming expertise or prefer simpler batch operations?

- Decide on tolerance for inconsistency vs the need for immediate decisions.

- Plan for observability, replayability, and schema governance from day one.

When in doubt, build a small, focused proof-of-concept. It’s cheaper to learn on a limited scale than to refactor an entire platform later.

FAQ

What is data processing?

Data processing is the set of operations applied to raw data to transform it into meaningful information. This includes collection, cleaning, transformation, aggregation, analysis, and storage. The output supports reporting, decision-making, machine learning, or other downstream uses.

What are the three methods of data processing?

The three commonly referenced methods are batch processing (processing data in scheduled groups), real-time or stream processing (processing data continuously as it arrives), and interactive processing (ad-hoc queries and analytics). Each method serves different latency, cost, and workload characteristics.

What is an example of data processing?

An example is an overnight ETL job that ingests logs, cleans and aggregates them, and loads summarized results into a data warehouse for next-morning reports. Another example is a fraud detection service that processes credit-card transactions in real time to block suspicious charges.

What are the four types of data processing?

Depending on how categories are defined, you might see four types described as batch processing, real-time/stream processing, interactive processing, and distributed processing. The fourth category emphasizes scaling across many machines to handle large datasets or high throughput.

What are the four different types of data processing activities?

Commonly identified activities include data collection (ingest), data validation and cleaning, data transformation and aggregation, and data storage and delivery (exporting results to dashboards, models, or downstream systems). These activities exist across batch and stream workflows, though their timing differs.

Choosing between batch and stream processing isn’t an either/or decision for most organizations — it’s about matching the right tool to the right business need, then building the observability and governance that make those tools reliable. When you get that mix right, your data becomes not just an archive but a dependable decision engine. And if you ever want a hand designing that engine, you know where to find us — we like coffee, clean data, and a good challenge.