Large language models (LLMs) have gone from research curiosities to business-critical tools almost overnight. As companies race to build chatbots, assistants, and content engines, the operational question becomes: how do you manage these powerful but complex systems reliably and responsibly? This article explains LLMOps — the emerging practices and tooling tailored for LLMs — why it matters, how it differs from traditional MLOps, and practical steps your team can take to deploy LLMs at scale.

Why LLMOps matters now

LLMs bring new capabilities — fluent generation, long-form reasoning, and multimodal inputs — but they also introduce unique operational challenges. Model sizes, latency sensitivity, prompt drift, safety risks, and costly fine-tuning all mean the old MLOps playbook needs an upgrade. Organizations that treat LLMs like smaller machine learning models risk outages, hallucinations, privacy breaches, and ballooning cloud bills.

LLMOps is the discipline that stitches together lifecycle automation, monitoring, governance, and infrastructure optimization specifically for LLMs. For a solid overview of LLM-specific lifecycle automation and best practices, see the practical guide from Red Hat.

LLMOps vs. MLOps: what’s really different?

On the surface, both LLMOps and MLOps cover data, training, deployment, and monitoring. The differences show up when you dig into the details:

- Model interaction: LLMs are often interacted with via prompts and embeddings rather than fixed feature pipelines. Managing prompt engineering and prompt versioning is unique to LLMOps.

- Cost & scale: LLM inference and fine-tuning can be orders of magnitude more expensive than traditional models, pushing teams to optimize for caching, batching, and model selection.

- Observability: Instead of only numeric metrics, LLMOps needs behavioral monitoring — e.g., hallucination rates, toxic output, and alignment regressions.

- Governance & safety: Human-in-the-loop moderation, red-teaming, and content filters are first-class concerns, not afterthoughts.

For a side-by-side comparison and guidance on operational best practices tailored to LLMs, Google Cloud’s explainer on the LLMOps lifecycle is a useful resource: What is LLMOps.

Key aspects of LLMOps

LLMOps pulls together a set of practices that support safe, reliable, and cost-effective LLM production systems. Some of the core aspects include:

- Prompt and instruction management: Versioning prompts and templates, A/B testing phrasing, and capturing contextual signals used at inference time.

- Data curation for fine-tuning and retrieval: Building clean, representative datasets for supervised fine-tuning and retrieval-augmented generation (RAG) indexing.

- Model lifecycle automation: Pipelines for fine-tuning, evaluation, deployment, and rollback specific to large models.

- Observability and metrics: Monitoring latency, cost per request, content quality metrics (e.g., hallucination rate), and user satisfaction signals.

- Infrastructure orchestration: Specialized hardware management (GPUs/TPUs), model sharding, and cost-aware serving strategies.

- Safety, governance, and compliance: Prompt redaction, PII detection, access controls, and audit trails for model outputs.

Wandb’s article on understanding LLMOps provides a practical look at development and deployment tools tailored for LLMs and how LLMOps extends MLOps practices in real projects: Understanding LLMOps.

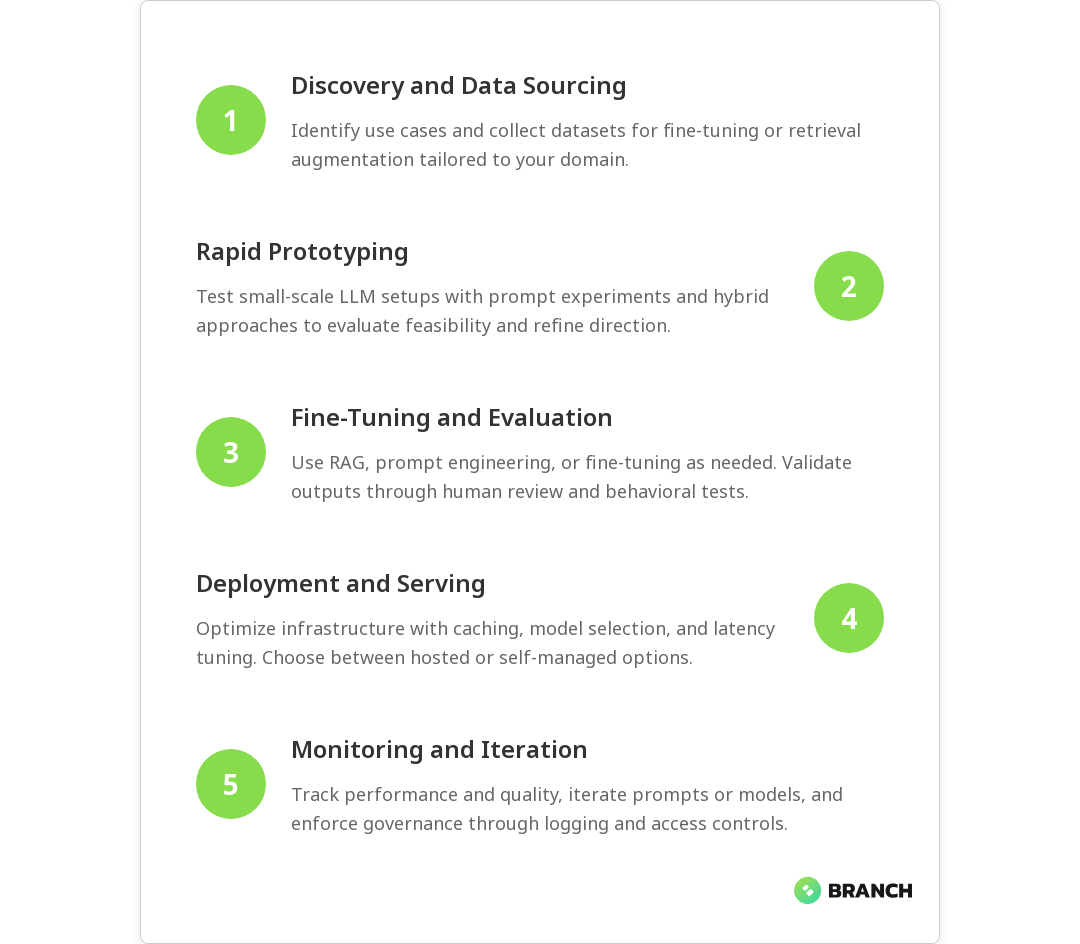

LLMOps lifecycle: practical stages

The LLMOps lifecycle is similar to MLOps in shape but different in content. A practical lifecycle might look like this:

- Discovery & sourcing: Identify use cases and collect domain-specific datasets and knowledge sources for retrieval augmentation.

- Prototyping: Rapidly iterate with small-scale tests, prompt experiments, and hybrid approaches (e.g., API + local cache).

- Fine-tuning & evaluation: Fine-tune when necessary; otherwise focus on RAG and prompt engineering. Use human evaluation and automated behavioral tests.

- Deployment & serving: Choose between hosted APIs, managed services, or self-hosting. Implement caching, model selection, and latency optimization.

- Monitoring & feedback: Track both system performance and content quality. Feed user feedback into retraining or prompt adjustments.

- Governance & iteration: Maintain access controls, audit logs, and safety checks. Iterate based on business needs and risk assessments.

Red Hat emphasizes lifecycle automation and continuous iteration for LLMs — automating as much of this sequence as possible reduces human error and improves reliability: LLMOps lifecycle automation.

Strategies to run LLMs effectively in production

Operationalizing LLMs requires a mix of engineering, data work, and governance. Here are practical strategies to adopt:

- Hybrid inference architecture: Use a mix of smaller, cheaper specialized models for routine tasks and larger models when high quality or deeper reasoning is needed. This reduces cost and improves responsiveness.

- Retrieval-augmented generation (RAG): Augment LLM output with vetted knowledge stores. RAG often delivers safer, more accurate responses than blind generation and reduces model fine-tuning needs.

- Prompt testing and canary rollouts: Treat prompt changes like code changes — test and roll out gradually while monitoring key behavioral metrics.

- Human-in-the-loop for safety: Route high-risk or ambiguous outputs for human review, especially in regulated domains like healthcare or finance.

- Cost observability: Track per-request compute and storage costs; use autoscaling, batching, and request prioritization to control spend.

PagerDuty’s guide to LLMOps highlights governance frameworks and operational performance optimization for running LLMs reliably, which is helpful when designing incident and escalation plans: LLMOps governance.

Challenges you’ll face (and how to approach them)

LLM projects can fail for technical and organizational reasons. Here are common pitfalls and how to mitigate them:

- Hallucinations and factual errors: Mitigation: RAG, grounding, and post-generation verification checks.

- Data privacy and compliance: Mitigation: PII detection, prompt redaction, and secure retrieval stores with access controls.

- Model drift and prompt decay: Mitigation: Continuous evaluation, user feedback loops, and scheduled retraining or prompt updates.

- Cost overruns: Mitigation: Mixed model sizes, caching common responses, and careful autoscaling rules.

- Tooling gaps: Mitigation: Combine MLOps platforms with LLM-specific tooling (prompt stores, RAG orchestrators) and invest in custom automation when needed.

Many teams find that evolving their CI/CD and monitoring pipelines to incorporate behavioral tests and safety checks is the most productive early investment. CircleCI’s write-up on the evolution from MLOps to LLMOps discusses orchestration and governance considerations that are useful when planning automation: From MLOps to LLMOps.

Emerging trends and tooling

The LLMOps ecosystem is maturing fast. Expect developments in:

- Prompt stores and version control: Tools to store, diff, and roll back prompts and injection patterns.

- Behavioral testing frameworks: Suites that test for hallucinations, bias, toxicity, and alignment drift.

- Model orchestration platforms: Systems that select models dynamically based on cost, latency, and requested capability.

- Hybrid hosting options: More flexible choices between cloud-hosted models and on-prem/self-hosted deployments for compliance-sensitive workloads.

Google Cloud’s material on LLMOps emphasizes real-time performance monitoring and data management, both of which are increasingly important as LLMs move into live user-facing systems: Real-time LLMOps guidance.

Best practices checklist

- Version prompts, embeddings, and retrieval indexes alongside code and models.

- Use RAG to ground responses and reduce hallucinations before committing to fine-tuning.

- Instrument behavioral metrics (hallucination rate, toxicity, customer satisfaction) and tie them into alerting.

- Implement gradual rollouts and canaries for prompt and model changes.

- Include human review for high-risk outputs and maintain audit logs for compliance.

- Optimize serving architecture for cost and latency: caching, sharding, and mixed-model strategies.

FAQ

What does LLMOps stand for?

LLMOps means Large Language Model Operations. It refers to practices, tooling, and processes for deploying and managing LLMs in production.

What is the difference between LLMOps and MLOps?

LLMOps extends MLOps to cover prompt management, retrieval augmentation, behavioral monitoring, and governance tailored for large language models.

What are the key aspects of LLMOps?

Key aspects include prompt versioning, RAG data curation, lifecycle automation, cost and latency optimization, and safety/governance frameworks.

What is the life cycle of LLMOps?

The LLMOps lifecycle spans discovery, prototyping, fine-tuning or retrieval design, deployment, monitoring, and governance with automation at each step.

What are the best practices for LLMOps?

Best practices include versioning prompts, using RAG, monitoring behavioral metrics, canary rollouts, human review for risky outputs, and cost-aware serving.

Closing thoughts

LLMOps is not a buzzword — it’s a pragmatic evolution that recognizes LLMs are different beasts than traditional models. Investing in LLMOps practices early will make your LLM projects more reliable, safer, and more cost-effective. Start with strong data pipelines, versioned prompts, RAG strategies, and behavioral monitoring; then iterate toward automation and governance. If you’re building business systems with LLMs, LLMOps is the discipline that turns experimental demos into dependable products.

For teams ready to go beyond experimentation, combining solid data engineering, responsible AI development practices, and cloud-native infrastructure will accelerate success. If you want help designing that roadmap, Branch Boston offers services that cover data engineering, AI development, and cloud solutions tailored to enterprise needs.